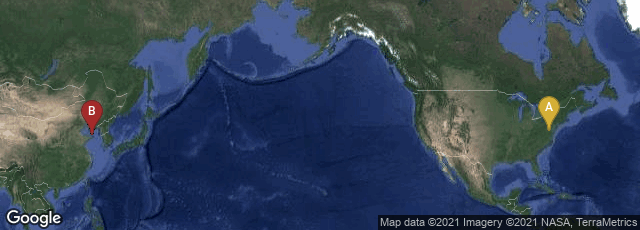

A: Washington, District of Columbia, United States, B: China

On August 29, 2014, the Internet Archive announced that data mining and visualization expert Kalev Leetaru, Yahoo Fellow at Georgetown University, had extracted over 14 million images from two million of the Internet Archive's public domain eBooks, spanning over 500 years of content. Of those 14 million images, 2.6 million had been uploaded to Flickr, the image-sharing site owned by Yahoo, with more uploads planned for the near future.

Also on August 29, 2014, BBC.com carried a story entitled "Millions of historic images posted to Flickr," by Leo Kelion, Technology desk editor, from which I quote:

"Mr Leetaru said digitisation projects had so far focused on words and ignored pictures.

" 'For all these years all the libraries have been digitising their books, but they have been putting them up as PDFs or text searchable works,' he told the BBC.

"They have been focusing on the books as a collection of words. This inverts that. . . .

"To achieve his goal, Mr Leetaru wrote his own software to work around the way the books had originally been digitised.

"The Internet Archive had used an optical character recognition (OCR) program to analyse each of its 600 million scanned pages in order to convert the image of each word into searchable text.

"As part of the process, the software recognised which parts of a page were pictures in order to discard them.

"Mr Leetaru's code used this information to go back to the original scans, extract the regions the OCR program had ignored, and then save each one as a separate file in the Jpeg picture format.

"The software also copied the caption for each image and the text from the paragraphs immediately preceding and following it in the book.

"Each Jpeg and its associated text was then posted to a new Flickr page, allowing the public to hunt through the vast catalogue using the site's search tool. . . ."